Learn how real-time sensing works, from latency budgets and fusion architectures to IIoT security benchmarks and deployment best practices for professionals.

Real-time sensing: Core mechanics, applications, and benefits

TL;DR:

- Real-time sensing guarantees predictable, deadline-bound data delivery for immediate decision-making.

- System architecture, from sensor fusion to dispatch, is critical to meet strict latency requirements.

- Proper planning of latency budgets and operational factors is essential for effective security and industrial applications.

Most security and industrial professionals assume real-time sensing simply means fast data. It doesn't. Real-time sensing is fundamentally about predictable, deadline-bound sensor data delivery that guarantees functionally usable outputs when decisions cannot wait. A system that delivers results in 50ms every single time outperforms one that occasionally hits 10ms but unpredictably spikes to 200ms. This article breaks down the core mechanics, architectural classifications, operational challenges, and security applications of real-time sensing so you can design, specify, and deploy systems that actually perform when it counts.

Table of Contents

- What is real-time sensing? The core definition and pipeline

- Types, architectures, and latency classifications in real-time sensing

- Operational challenges: Latency budgets, environmental edge cases, and power trade-offs

- Security and IIoT applications: Practical impact and performance benchmarks

- A fresh perspective: What most professionals misunderstand about real-time sensing

- Next steps: Connect with advanced sensing solutions

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Predictable latency is essential | Real-time sensing prioritizes guaranteed, consistent response times over raw speed. |
| Architectures shape capability | System design (centralized vs decentralized, hard vs soft deadlines) determines usability and flexibility. |
| Environmental factors matter | Temperature, interference, and power efficiency all impact real-time sensing reliability. |
| Security benefits are proven | Advanced sensor data enables rapid anomaly detection and threat response in IIoT environments. |
| Hybrid edge-cloud is key | Combining edge and cloud processing maximizes efficiency for demanding industrial and security applications. |

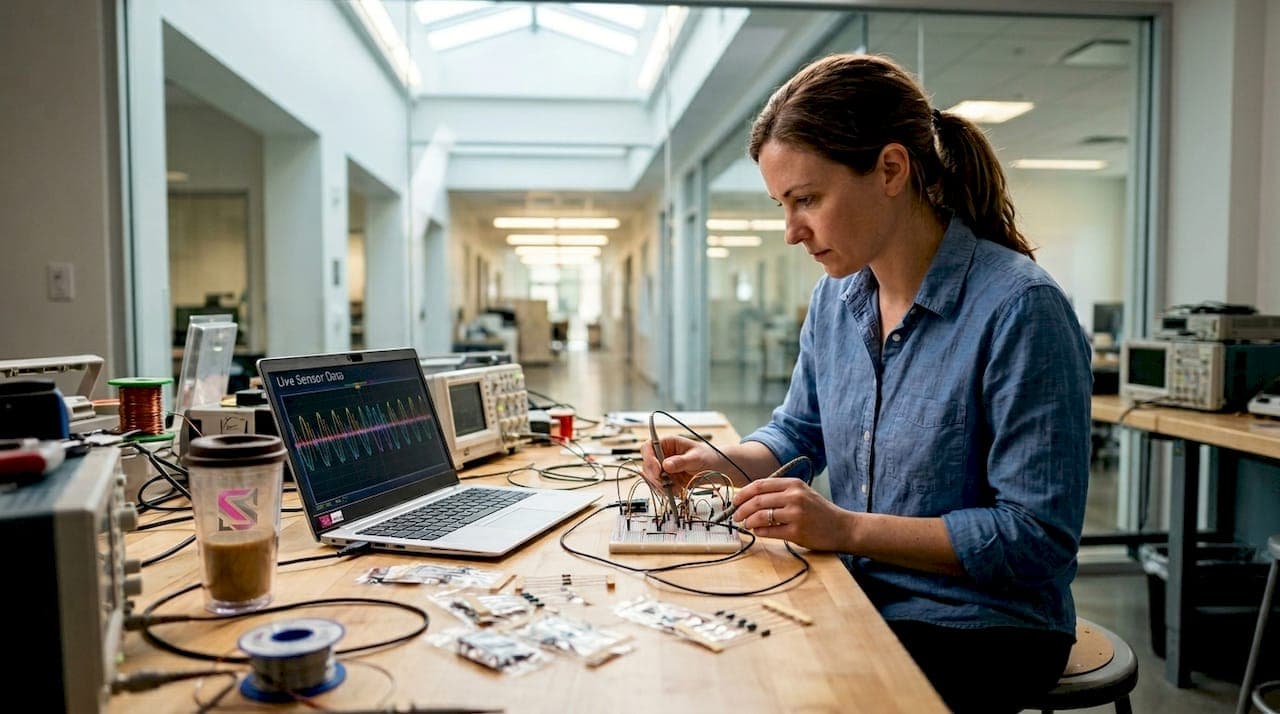

What is real-time sensing? The core definition and pipeline

The formal definition matters here. Real-time sensing is "the continuous acquisition, processing, and analysis of sensor data within strict latency bounds that ensure outputs are functionally usable for immediate decision-making or control in time-critical applications." Notice what that definition does not say: it says nothing about being the fastest possible system. It says outputs must be usable within a guaranteed window.

This distinction separates real-time from near-real-time. Near-real-time systems tolerate latencies measured in seconds or minutes, which is acceptable for human-speed review but useless for automated perimeter breach response or industrial fault isolation. Batch processing sits further back still, analyzing stored data after the fact.

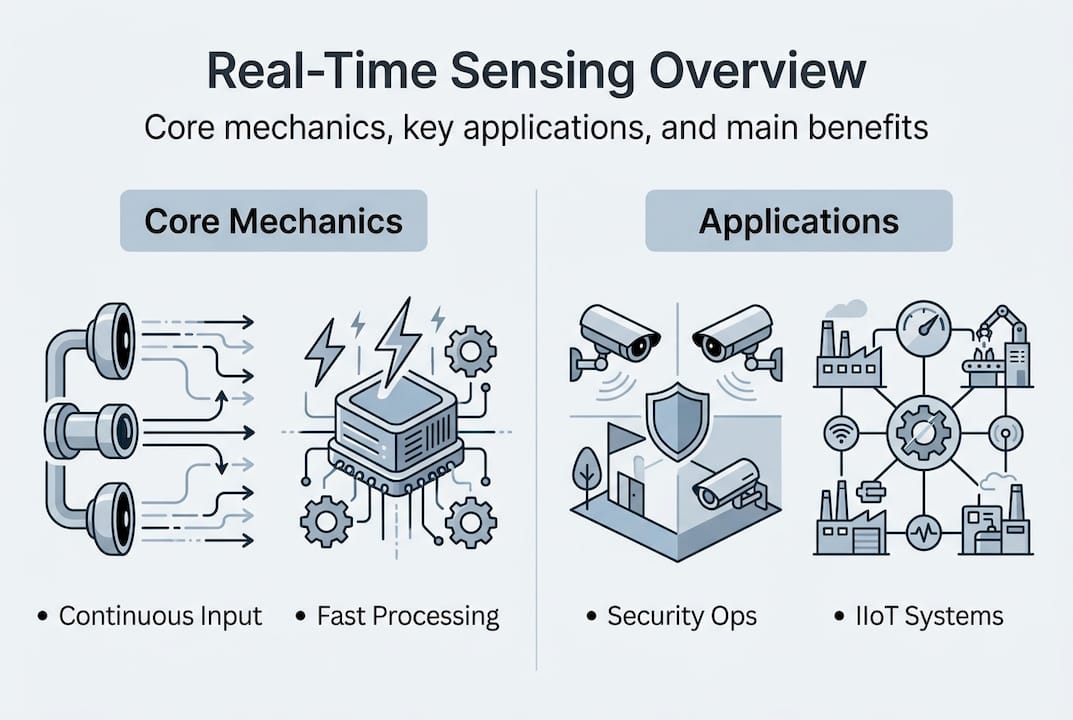

The processing pipeline in a real-time sensing system moves through five distinct stages:

- Acquisition: Raw sensor data is captured at defined sampling rates

- Preprocessing: Noise filtering, signal conditioning, and outlier rejection occur here

- Alignment: Temporal and spatial synchronization using standards like IEEE 1588 Precision Time Protocol (PTP) ensures multi-sensor data is coherent

- Fusion: A fusion kernel, often a Kalman filter or its variants, merges aligned data streams into a unified state estimate

- Dispatch: The processed output is forwarded to decision logic or control systems within the latency budget
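
As a rough illustration of the fusion stage, the sketch below runs one cycle of a scalar Kalman filter that merges a LiDAR range reading with a camera range reading. The class name, the noise variances, and the constant-state motion model are assumptions chosen for illustration, not values from any particular deployment.

```python
from dataclasses import dataclass


@dataclass
class ScalarKalman:
    """Minimal 1-D Kalman filter for a single, slowly varying quantity."""
    x: float         # current state estimate (e.g. range in metres)
    p: float         # variance of that estimate
    q: float = 0.01  # process noise added per cycle (assumed value)

    def predict(self) -> None:
        # Constant-state model: the estimate carries over, uncertainty grows.
        self.p += self.q

    def update(self, z: float, r: float) -> None:
        # z is a measurement, r the noise variance of the sensor that made it.
        k = self.p / (self.p + r)   # Kalman gain: how much to trust z
        self.x += k * (z - self.x)
        self.p *= 1.0 - k


def fuse_cycle(kf: ScalarKalman, lidar_m: float, camera_m: float,
               lidar_var: float = 0.01, camera_var: float = 0.25) -> float:
    """One fusion cycle: predict once, then fold in each aligned measurement."""
    kf.predict()
    kf.update(lidar_m, lidar_var)
    kf.update(camera_m, camera_var)
    return kf.x


kf = ScalarKalman(x=10.0, p=1.0)
estimate = fuse_cycle(kf, lidar_m=10.2, camera_m=9.5)
print(f"fused range: {estimate:.3f} m, variance: {kf.p:.4f}")
```

With these assumed variances the lower-noise LiDAR reading dominates the fused estimate. Tuning the measurement noise terms controls that weighting.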

Each stage consumes time. The sum of all stage durations must fit inside your system's latency bound. If any stage overruns, the entire pipeline misses its deadline.

"Knowing your latency bound before selecting hardware is more operationally critical than maximizing raw sensor speed."

For security professionals deploying advanced sensor technologies, the practical implication is this: specify your deadline first, then work backward through the pipeline to allocate time budgets per stage. This approach prevents the common mistake of over-investing in fast sensors while under-specifying the fusion and dispatch layers.

Pro Tip: Define your end-to-end latency requirement before selecting any hardware. A Kalman filter tuned for your specific noise profile will consistently outperform a faster but poorly configured alternative.

With foundational concepts clear, let's look at how real-time sensing architectures are categorized.

Types, architectures, and latency classifications in real-time sensing

Real-time systems are classified by deadline sensitivity: hard, soft, and firm. Each classification has direct consequences for system design and failure behavior.

| Classification | Deadline miss behavior | Typical security application |
|---|---|---|
| Hard real-time | System failure or safety breach | Emergency shutdown, intrusion trigger |
| Soft real-time | Graceful degradation, reduced quality | Video analytics, environmental monitoring |
| Firm real-time | Late output is discarded as worthless; occasional misses are tolerated | Access control event logging |

Hard real-time is non-negotiable in safety-critical and perimeter security contexts. Missing a 33ms deadline in a LiDAR-based intrusion detection system is not a minor inconvenience. It is a functional failure.
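
The three classifications can be read as three different policies for a result that arrives late. The sketch below encodes that reading; the enum, the function name, and the returned action strings are hypothetical labels, not part of any standard API.

```python
from enum import Enum


class Deadline(Enum):
    HARD = "hard"
    SOFT = "soft"
    FIRM = "firm"


def handle_result(kind: Deadline, latency_ms: float, deadline_ms: float) -> str:
    """Return the action to take for one pipeline output."""
    if latency_ms <= deadline_ms:
        return "use"                 # on time: every class uses the result
    if kind is Deadline.HARD:
        return "fail_safe"           # a miss is treated as a system fault
    if kind is Deadline.FIRM:
        return "discard"             # late output has no value, drop it
    return "use_degraded"            # soft: still useful, at reduced quality


print(handle_result(Deadline.HARD, latency_ms=41.0, deadline_ms=33.0))
print(handle_result(Deadline.FIRM, latency_ms=41.0, deadline_ms=33.0))
print(handle_result(Deadline.SOFT, latency_ms=41.0, deadline_ms=33.0))
```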

Architecturally, real-time sensing systems split into two main models:

- Centralized fusion: All sensor streams route to a single processing node. Easier to manage and optimize, but creates a single point of failure and can bottleneck under high sensor counts

- Decentralized fusion: Processing occurs at or near each sensor node before aggregation. More resilient and scalable, but requires careful synchronization to avoid temporal misalignment between nodes
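
The synchronization concern in the decentralized model can be sketched as a nearest-timestamp pairing step. This assumes both nodes stamp their samples against a shared clock (for example one disciplined by PTP); the function name and the 5ms tolerance are hypothetical.

```python
from bisect import bisect_left


def pair_nearest(lidar_ts, camera_ts, tolerance_s):
    """Pair each LiDAR timestamp with the closest camera timestamp.

    camera_ts must be sorted ascending. LiDAR samples with no camera
    sample inside the tolerance are dropped rather than fused misaligned.
    """
    pairs = []
    for t in lidar_ts:
        i = bisect_left(camera_ts, t)
        # Only the neighbours on either side of t can be the nearest.
        candidates = camera_ts[max(0, i - 1):i + 1]
        if not candidates:
            continue
        best = min(candidates, key=lambda c: abs(c - t))
        if abs(best - t) <= tolerance_s:
            pairs.append((t, best))
    return pairs


lidar = [0.000, 0.033, 0.066]
camera = [0.001, 0.030, 0.080]
print(pair_nearest(lidar, camera, tolerance_s=0.005))
```

In this example the third LiDAR sample has no camera frame within 5ms, so it is left unpaired instead of being fused with stale data.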

For sensor tech compliance standards in regulated environments, decentralized architectures often provide better audit trails because each node logs its own state independently.

Fusion also operates at three distinct levels:

- Data-level fusion: Raw sensor streams are merged before any feature extraction. Highest fidelity, highest compute demand

- Feature-level fusion: Processed features from individual sensors are combined. Balanced approach for most security deployments

- Decision-level fusion: Independent classification outputs from each sensor are merged. Lowest bandwidth requirement, suitable for distributed edge deployments
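
Decision-level fusion is the simplest of the three to sketch, because each sensor has already reduced its data to a single confidence value. The example below uses a weighted average with a fixed alarm threshold; the sensor names, weights, and threshold are illustrative assumptions, and real deployments may use voting or probabilistic combination instead.

```python
def fuse_decisions(confidences: dict, weights: dict, threshold: float = 0.5):
    """Combine per-sensor detection confidences into one score and a decision.

    confidences: sensor name -> confidence in [0, 1] that a threat is present
    weights:     sensor name -> relative trust in that sensor
    """
    total_weight = sum(weights[name] for name in confidences)
    score = sum(confidences[name] * weights[name]
                for name in confidences) / total_weight
    return score, score >= threshold


score, alarm = fuse_decisions(
    confidences={"lidar": 0.9, "radar": 0.7, "camera": 0.2},
    weights={"lidar": 0.5, "radar": 0.3, "camera": 0.2},
)
print(f"fused score: {score:.2f}, alarm: {alarm}")
```

Only three numbers cross the network per cycle here, which is why this level suits bandwidth-constrained edge nodes.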

A practical latency benchmark: a 30Hz LiDAR-camera fusion system produces one output every 1/30 s, so it carries a hard deadline of roughly 33ms per cycle. Every pipeline stage must fit inside that window. Tailored security solutions that match architecture to application requirements consistently outperform generic off-the-shelf configurations.

Understanding types and architectures lays the groundwork for tackling real-world challenges.

Operational challenges: Latency budgets, environmental edge cases, and power trade-offs

Deploying real-time sensing in the field introduces challenges that controlled lab testing rarely surfaces. Environmental interference is the first obstacle. Temperature fluctuations cause sensor drift, particularly in analog signal chains. Electromagnetic interference (EMI) from industrial machinery corrupts wireless sensor transmissions and can introduce jitter that blows latency budgets unpredictably.

The end-to-end latency budget for a 30Hz LiDAR-camera fusion system breaks down roughly as follows:

| Stage | Typical allocation |
|---|---|
| Sensor acquisition | 3-5ms |
| ADC and signal conditioning | 2-4ms |
| Wireless/wired transmission | 5-10ms |
| OS scheduling jitter | 1-3ms |
| Fusion computation | 8-12ms |
| Dispatch to control layer | 1-2ms |
| Total budget | ~33ms |
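
It can help to check the table's ranges against the deadline directly. The sketch below sums the lower and upper ends of each allocation and compares them with the cycle time at 30Hz; the dictionary and function names are hypothetical, and the ranges are copied from the table as given.

```python
STAGES_MS = {
    "sensor acquisition": (3, 5),
    "ADC and signal conditioning": (2, 4),
    "transmission": (5, 10),
    "OS scheduling jitter": (1, 3),
    "fusion computation": (8, 12),
    "dispatch to control layer": (1, 2),
}


def budget_summary(stages: dict, rate_hz: float) -> dict:
    """Sum best-case and worst-case stage times and compare to the deadline."""
    deadline_ms = 1000.0 / rate_hz
    best_ms = sum(low for low, _ in stages.values())
    worst_ms = sum(high for _, high in stages.values())
    return {
        "deadline_ms": deadline_ms,
        "best_ms": best_ms,
        "worst_ms": worst_ms,
        "worst_case_fits": worst_ms <= deadline_ms,
    }


summary = budget_summary(STAGES_MS, rate_hz=30)
print(summary)
```

Taken at face value, the lower ends sum to 20ms and the upper ends to 36ms, so the pipeline only meets a 33ms deadline if the stages do not all hit their upper allocation in the same cycle. For a hard deadline, the worst-case sum is the figure to design against.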

Every millisecond counts. OS scheduling jitter alone can consume 3ms in a non-real-time Linux kernel, which is why industrial sensor automation deployments frequently run RTOS (Real-Time Operating System) environments to enforce deterministic scheduling.

For wireless sensor networks, power efficiency directly affects latency consistency. Sensors that duty-cycle aggressively to save battery may introduce variable wake-up delays that corrupt timing. Efficient scheduling protocols like TDMA (Time Division Multiple Access) assign fixed transmission slots, keeping latency predictable without burning excess power.
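
A minimal sketch of why fixed TDMA slots keep latency bounded: each node gets a known offset inside a repeating frame, so the longest a ready sample can wait is one frame. The node names, slot length, and guard interval are assumptions for illustration and do not correspond to any specific radio protocol.

```python
def tdma_schedule(node_ids, slot_ms: float, guard_ms: float = 0.0):
    """Assign each node a fixed transmit offset within a repeating frame."""
    stride_ms = slot_ms + guard_ms
    frame_ms = len(node_ids) * stride_ms
    offsets = {node: index * stride_ms for index, node in enumerate(node_ids)}
    return frame_ms, offsets


def worst_case_wait_ms(frame_ms: float) -> float:
    # A sample that becomes ready just after its slot opens has to wait
    # for the same slot in the next frame, so the wait is bounded by one frame.
    return frame_ms


frame_ms, offsets = tdma_schedule(
    ["lidar-1", "radar-1", "vib-1", "vib-2"], slot_ms=2.0, guard_ms=0.5
)
print(f"frame: {frame_ms} ms, offsets: {offsets}")
print(f"worst-case transmit wait: {worst_case_wait_ms(frame_ms)} ms")
```

The bound grows with node count, so it should be checked against the transmission allocation in the latency budget before adding sensors.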

Calibration is another non-negotiable operational requirement. Sensors drift over time. A camera whose intrinsic parameters have shifted by even a small margin will misalign with a co-located LiDAR, degrading fusion accuracy silently. Scheduled recalibration intervals and in-field validation routines keep systems performing to spec. The security compliance guide outlines calibration documentation requirements for regulated deployments.

The priorities for managing operational challenges, in order, are:

1. Establish your full end-to-end latency budget before integration begins

2. Select transmission media based on EMI exposure at the deployment site

3. Use an RTOS or real-time kernel patches to bound OS scheduling jitter

4. Implement scheduled recalibration with automated drift detection

5. Validate power scheduling protocols against your latency requirements
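
Automated drift detection can be as simple as watching a rolling average of some alignment residual. The sketch below assumes such a residual is available, for instance the offset between where the LiDAR and the camera each place a fixed reference target; the class name, window size, and threshold are hypothetical.

```python
from collections import deque
from statistics import fmean


class DriftMonitor:
    """Flags drift when the mean residual over a full window exceeds a limit."""

    def __init__(self, window: int, threshold: float):
        self.residuals = deque(maxlen=window)
        self.threshold = threshold

    def add(self, residual: float) -> bool:
        """Record one residual; return True if recalibration is indicated."""
        self.residuals.append(residual)
        window_full = len(self.residuals) == self.residuals.maxlen
        # Averaging over a full window avoids alarming on a single noisy sample.
        return window_full and abs(fmean(self.residuals)) > self.threshold


monitor = DriftMonitor(window=3, threshold=1.0)
for value in [0.2, 0.3, 0.1, 2.0, 2.5, 3.0]:
    if monitor.add(value):
        print(f"drift suspected after residual {value}")
```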

Pro Tip: Adding redundant sensors improves reliability but always adds latency. Each additional stream requires alignment and fusion time. Build redundancy with a latency impact assessment, not as an afterthought.

With operational challenges mapped out, let's examine how real-time sensing delivers actionable security improvements.

Security and IIoT applications: Practical impact and performance benchmarks

Real-time sensing is the operational backbone of modern security and Industrial IoT (IIoT) deployments. In perimeter security, LiDAR and radar sensors deliver continuous spatial awareness with sub-50ms response times, enabling automated threat classification before a human operator even receives an alert. Vibration sensing on critical infrastructure detects mechanical anomalies early, reducing unplanned downtime through predictive maintenance rather than reactive repair.

On the IIoT security side, real-time sensor data enables anomaly detection at the network and operational technology (OT) edge. The DataSense dataset for IIoT attack scenarios demonstrates that ML models trained on real-time sensor streams achieve high detection rates while maintaining low resource consumption, a critical constraint for edge-deployed hardware with limited compute budgets. Up to 25% model size reduction is achievable through quantization and pruning without significant accuracy loss.

Performance benchmarks from edge inference platforms are instructive. YOLOX achieves 107 FPS versus YOLOv12's 45 FPS on the Jetson AGX Orin platform, with energy consumption of 0.58 J/frame compared to 0.75 J/frame respectively. For real-time security applications where both throughput and energy matter, model selection has direct operational consequences.
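
The two quoted metrics can be combined into an average power figure, since frames per second multiplied by joules per frame gives watts. The sketch below does that arithmetic with the numbers above, assuming they are sustained averages at full throughput. The 30 FPS comparison is a further assumption: it treats energy per frame as constant at lower frame rates and ignores idle power.

```python
def average_power_w(fps: float, joules_per_frame: float) -> float:
    """Average power in watts: frames per second times joules per frame."""
    return fps * joules_per_frame


# (maximum FPS, joules per frame) as quoted in the text
MODELS = {"YOLOX": (107, 0.58), "YOLOv12": (45, 0.75)}
CAMERA_FPS = 30  # hypothetical fixed camera rate

for name, (max_fps, j_per_frame) in MODELS.items():
    full_rate_w = average_power_w(max_fps, j_per_frame)
    capped_w = average_power_w(min(CAMERA_FPS, max_fps), j_per_frame)
    print(f"{name}: {full_rate_w:.2f} W at {max_fps} FPS, "
          f"{capped_w:.2f} W at {CAMERA_FPS} FPS")
```

On these figures, YOLOX is cheaper per frame but draws more total power when run flat out (about 62 W against about 34 W), because it processes more than twice as many frames. Which number matters depends on whether the node is throughput-bound or fed by a fixed-rate camera.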

Key performance considerations for real-time security deployments:

- Detection latency: Sub-33ms end-to-end for hard real-time threat response

- False positive rate: Directly affects operator fatigue and response credibility

- Energy per inference: Critical for battery-backed and solar-powered edge nodes

- Model update cadence: Threat landscapes evolve; models must be updatable without system downtime

"OT edge deployments that process sensor data locally stop threats before they propagate to enterprise networks, reducing breach impact significantly."

For sensor technology alternatives in constrained deployments, lightweight inference models running on dedicated edge hardware deliver the throughput needed without centralized cloud dependency. Security agencies deploying real-time sensing for critical infrastructure protection benefit from this architecture because it eliminates cloud latency entirely from the threat response loop.

Now that practical impact and benchmarks are clear, let's step back for a fresh perspective on what most professionals overlook about real-time sensing.

A fresh perspective: What most professionals misunderstand about real-time sensing

Here is the uncomfortable truth: most real-time sensing projects fail not because of hardware limitations but because of architectural misunderstanding. Teams chase raw sensor speed while neglecting the fusion and dispatch layers where latency actually accumulates. They add redundant sensors to improve reliability without accounting for the synchronization overhead each additional stream introduces.

The confusion between real-time and fast runs deep. We have seen deployments where engineers specified 1000Hz sampling rates on sensors feeding a 30Hz fusion kernel. The extra samples added preprocessing load without improving output frequency. Predictability was sacrificed for the appearance of speed.

Hybrid edge-cloud architectures represent the practical answer for most industrial automation and security deployments. Hard real-time decisions execute at the edge where latency is sub-50ms. Soft real-time analytics, model retraining, and compliance logging route to cloud infrastructure where compute is elastic. This split respects the latency requirements of each function without forcing every workload into the same constrained edge environment.

The trade-off between accuracy and compute is real and must be managed explicitly. A more accurate fusion model that occasionally misses its deadline is operationally worse than a slightly less accurate model that never does. Always measure both latency consistency and detection accuracy together, not in isolation.
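
One way to make latency consistency measurable is to report worst case, a high percentile, and deadline miss rate rather than the mean. The sketch below compares a steady pipeline with a faster but spiky one; the sample data and the 60ms deadline are invented for illustration, and the percentile uses a simple nearest-rank rule.

```python
import math


def latency_report(samples_ms, deadline_ms: float) -> dict:
    """Summarize latency consistency against a deadline."""
    ordered = sorted(samples_ms)
    misses = sum(1 for sample in ordered if sample > deadline_ms)
    # Nearest-rank 99th percentile.
    p99_index = max(0, math.ceil(0.99 * len(ordered)) - 1)
    return {
        "mean_ms": sum(ordered) / len(ordered),
        "p99_ms": ordered[p99_index],
        "worst_ms": ordered[-1],
        "miss_rate": misses / len(ordered),
    }


steady = [50.0] * 100                 # always 50 ms
spiky = [10.0] * 95 + [200.0] * 5     # usually 10 ms, sometimes 200 ms

print("steady:", latency_report(steady, deadline_ms=60.0))
print("spiky: ", latency_report(spiky, deadline_ms=60.0))
```

The spiky pipeline has the lower mean yet misses the deadline in 5% of cycles, which is the case the mean alone hides. Reporting these figures next to detection accuracy keeps both sides of the trade-off visible.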

Having explored these expert nuances, here's how advanced solutions can help you take the next step.

Next steps: Connect with advanced sensing solutions

Real-time sensing performance depends on architecture decisions made early in the design process. Getting those decisions right requires validated tools, experienced partners, and solutions built for your specific operational context.

BeyondSensor provides purpose-built resources for professionals ready to move from theory to deployment. Whether you represent a government body or enterprise security operation, solutions for security agencies address the hard real-time requirements of perimeter defense and critical infrastructure monitoring. For integration teams building multi-vendor sensing stacks, solutions for system integrators offer ecosystem matchmaking and compliance validation support. Explore the full catalog of advanced sensing tools to identify the right components for your latency budget and deployment environment.

Frequently asked questions

How does real-time sensing differ from near-real-time or batch processing?

Real-time sensing guarantees predictable responses within milliseconds, while near-real-time tolerates seconds to minutes and batch processing analyzes stored data after the fact, making neither suitable for automated threat response.

What are typical latency budgets for real-time systems?

Safety-critical systems typically require under 50ms end-to-end, with a 33ms budget for 30Hz LiDAR-camera fusion being a common benchmark across security and industrial applications.

Why is predictability prioritized over raw speed in real-time sensing?

Predictability ensures deadline compliance required by safety and security systems, whereas raw speed that varies unpredictably can cause missed deadlines that constitute functional failures in hard real-time contexts.

How does real-time sensing improve security in industrial IoT?

It enables rapid anomaly detection and automated threat response at the OT edge, with ML models on IIoT datasets achieving high detection rates while maintaining low resource consumption suitable for constrained edge hardware.

What sensors are commonly used for real-time security applications?

LiDAR, radar, and vibration sensors are the primary components in perimeter monitoring and industrial predictive maintenance systems, each selected for its specific detection characteristics and latency profile.
